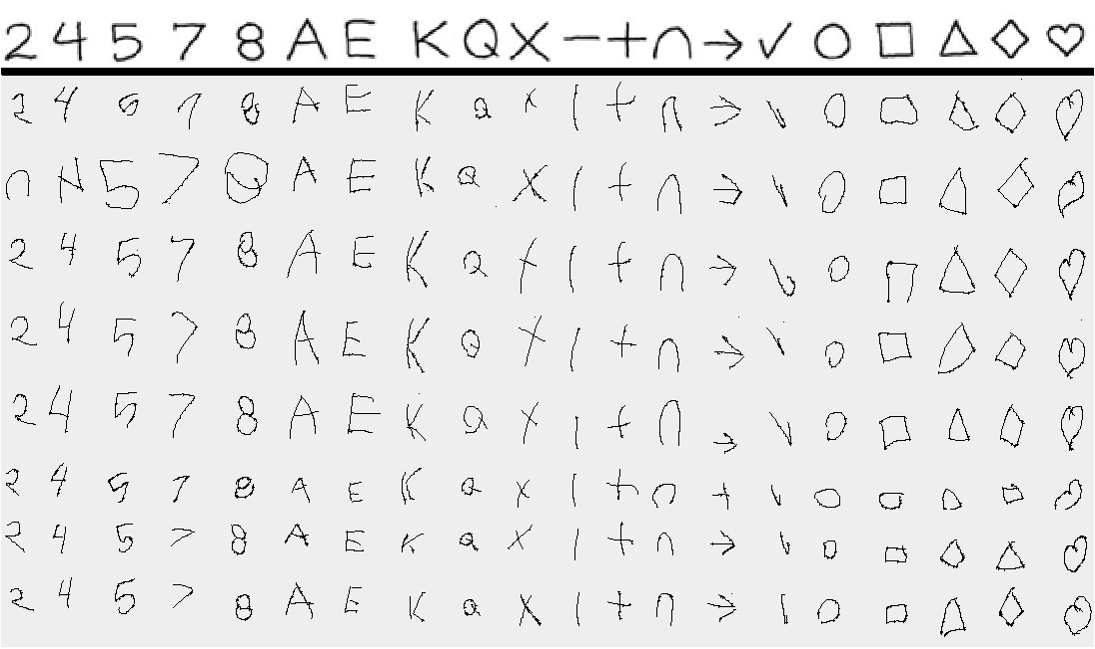

We are investigating how children create touchscreen gestures in order to improve recognition rates for their gestures. Previous work has studied how people articulate gestures using tools such as GREAT and GHoST, but it has largely focused on adults' gestures. Our prior work has demonstrated that children are less consistent than adults when making gestures, which leads to lower recognition rates. We plan to develop new methods of characterizing children's gestures that we can leverage to build new, more effective recognition algorithms, as outlined in our study [Shaw and Anthony, ICMI 2016]. We have also shown that humans recognize children's gestures better than machine algorithms do [Shaw et al., ICMI 2017], and we are working on improving machine algorithms to close that gap.

The Team

Alex Shaw

Dr. Lisa Anthony

Dr. Jaime Ruiz

Understanding Gestures Publications

Refereed Conference Papers

1. Shaw, A., Ruiz, J., and Anthony, L. 2017. Comparing human and machine recognition of children's touchscreen stroke gestures. In Proceedings of the 19th ACM International Conference on Multimodal Interaction (ICMI 2017). ACM, New York, NY, USA, 32-40. Best Student Paper. [PDF]

2. Shaw, A. and Anthony, L. 2016. Analyzing the articulation features of children's touchscreen gestures. In Proceedings of the 18th ACM International Conference on Multimodal Interaction (ICMI 2016). ACM, New York, NY, USA, 333-340. Nominated for Best Student Paper. [PDF]

Refereed Conference Posters

3. Shaw, A. and Anthony, L. 2016. Toward a Systematic Understanding of Children's Touchscreen Gestures. In Extended Abstracts of the ACM Conference on Human Factors in Computing Systems (CHI 2016). San Jose, CA, USA, 7 May 2016, 1752-1759. [PDF and Poster]

Theses

4. Shaw, A. 2020. Automatic Recognition of Children’s Touchscreen Stroke Gestures. Ph.D. thesis, Department of Computer and Information Science and Engineering, University of Florida. March 2020. [PDF]

Funding

This work is partially supported by National Science Foundation Grant Awards #IIS-1552598, #IIS-1218395/#IIS-1433228, and #IIS-1218664. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the National Science Foundation.

Blog Posts

- Two INIT Lab undergraduates complete successful senior theses! #latertweet

- #laterpub AVI’2020 paper published on Fitts’ Law for kids!

- New survey paper from INIT Lab graduate Alex Shaw, Ph.D.!

- Understanding Gestures Project: Paper on children’s cognitive development and touchscreen interactions published at ICMI 2020

- Understanding Gestures Project: My take-aways from running user studies with children ages 4 to 7

- INIT Lab PhD student Alex Shaw defends his dissertation!

- Understanding Gestures Project Update: Cognitive Development and Touchscreen Interaction Data Analysis

- Understanding Gestures Project: Getting started in the field of touchscreen gestures and preparing for running studies with young children

- Understanding Gestures Project: First Experience of Running User Studies with Young Children

- Understanding Gestures Project: Cognitive Development and Touchscreen Interaction in Younger Children